Primary artifact: Ten-page PRD (PM) → A working prototype + a 30-pair eval set (Product Builder)

Product Builder OS is the working spec for the role LinkedIn just minted. Six skills, one career ladder, a 35-chapter companion handbook.

You got fired as a Product Manager. Rehired as a Product Builder.

From the manifesto: The role got rewritten

LinkedIn owns the title — their Associate Product Builder program rebranded the famous APM track in 2025. Product Builder OS is the working methodology that goes underneath it: what the role actually does, day to day, when you are good at it.

Not frameworks. Workflows. Each skill is the exact day-to-day discipline of a working Product Builder, with a Claude Code recipe attached.

If you can't build it in hours, you're a PM. If you can, you're a Product Builder.

AI compresses build time toward zero. Insight is the only durable edge.

You don't design RAG. You decide whether you need it.

Did the spec get built? Wrong question. Did the customer's problem get solved? Right question.

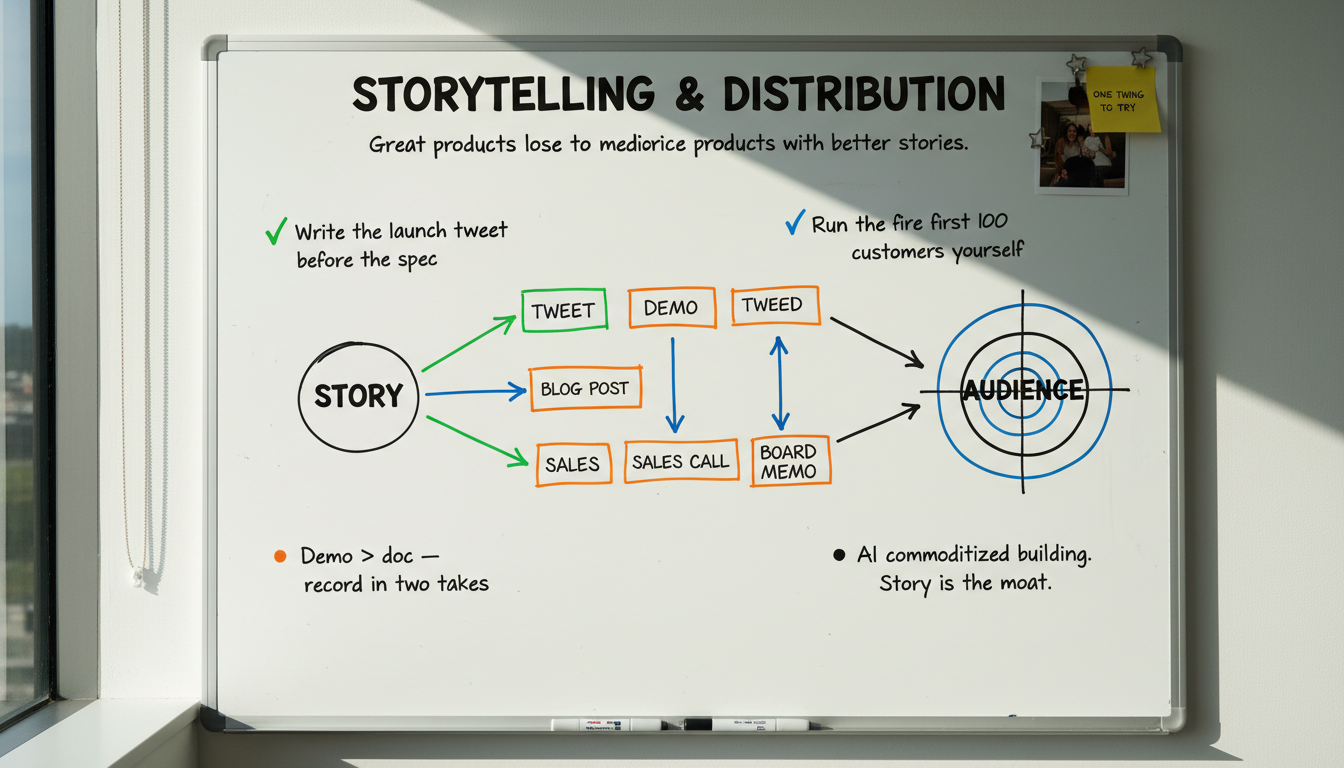

Great products lose to mediocre products with better stories.

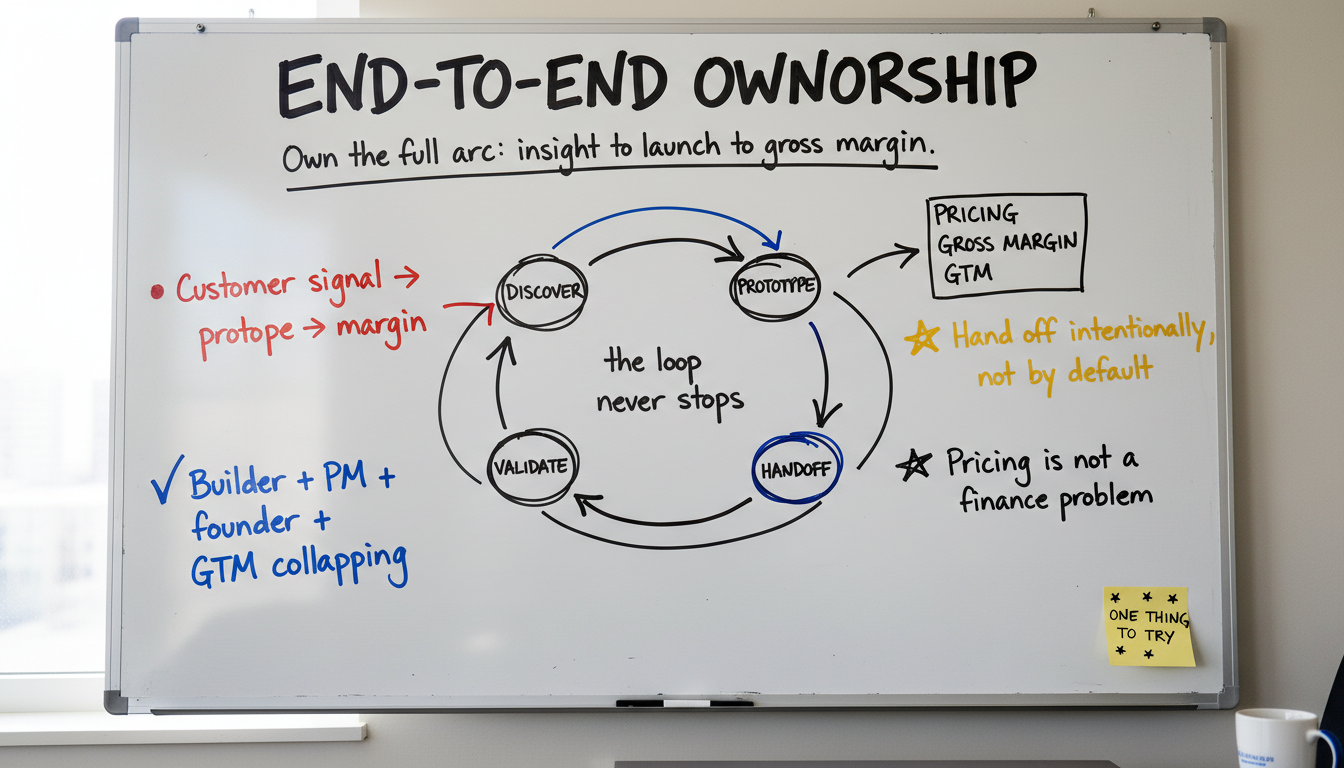

Own the full arc. Insight to prototype to launch to gross margin to second iteration.

If you can't build it in hours, you're a PM. If you can, you're a Product Builder.

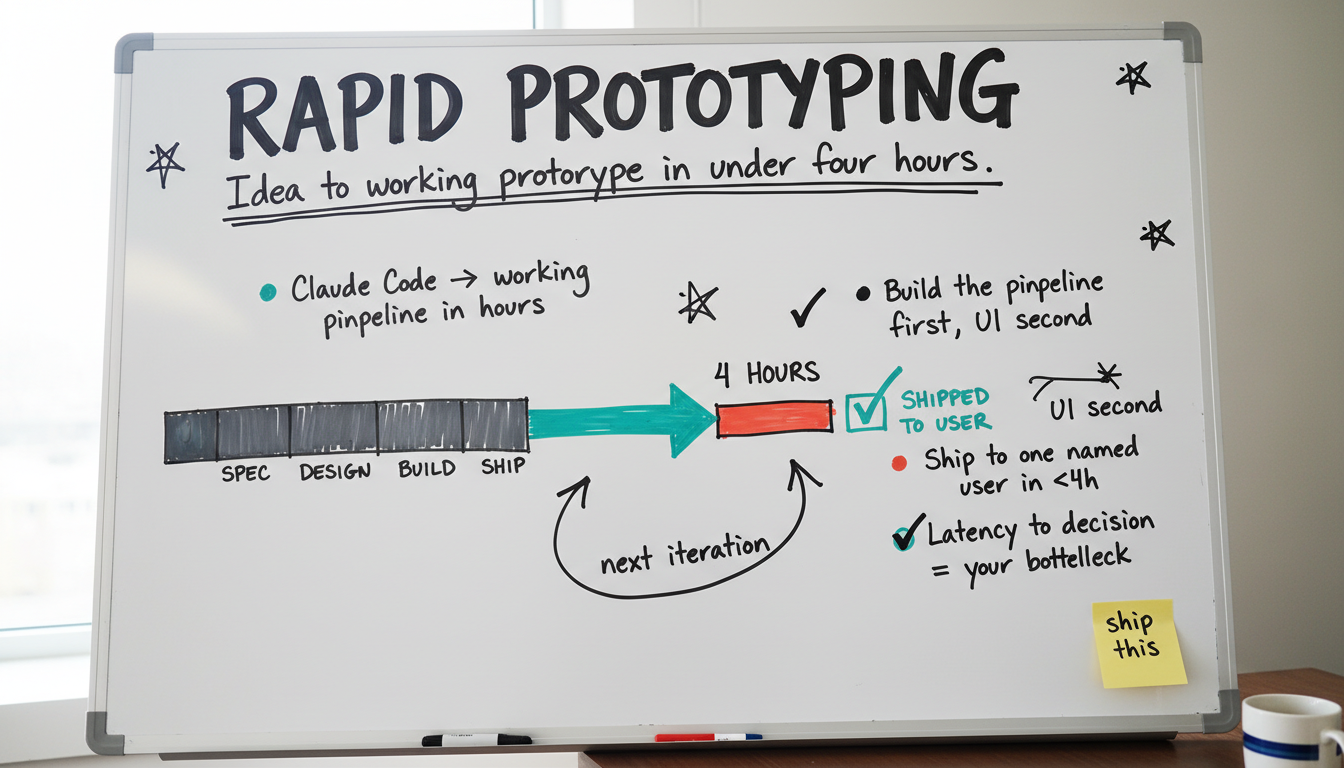

Idea to deployed prototype in under four hours, or your stack is the bottleneck. The single skill that separates a Product Builder from a Product Manager. Everything else is downstream.

This is the breakthrough skill. Everything else in the Standard is downstream of this one. When you go from idea to working prototype in hours instead of weeks, the entire product loop accelerates: discovery, validation, the engineering handoff, even how you communicate with executives. You stop arguing about specs because you can just build the thing. You stop waiting for engineering sprints because the prototype is already done before the sprint starts.

Claude Code (and the equivalents) make this possible for PMs who are not full-stack engineers. You describe what you want, iterate on it in real time, and end up with something real enough to put in front of users. The Builder PMs hiring you in 2026 expect this skill as the floor. Without it, the title doesn't apply.

Install Claude Code (`npm install -g @anthropic-ai/claude-code`). Create a project folder. Wire one-command deploy with `npx vercel`. Add your `ANTHROPIC_API_KEY` to a `.env.local` file. Test the whole loop with a hello-world page. Total setup time: under three hours. If yours took longer, your stack needs simplifying.

The biggest mistake is starting with the UI. Start with the pipeline — the thing that actually does the work. Ask Claude Code to build the backend logic first: input processing, AI reasoning with a specific system prompt, output formatting, action/integration. No UI yet. Make the pipeline work end-to-end with test inputs. THEN wrap it in a minimal web UI.
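A minimal sketch of the pipeline-first order described above, with no UI. Every name here (`SYSTEM_PROMPT`, `run_pipeline`, `fake_model`, the outbox list) is hypothetical, and the model call is injected as a plain function so the four stages can be exercised with test inputs before any real API or integration is wired in.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical system prompt; replace with the one for your use case.
SYSTEM_PROMPT = "You triage customer feature requests. Reply with one short recommendation."


@dataclass
class PipelineResult:
    summary: str
    action_taken: str


def process_input(raw: str) -> str:
    """Stage 1: input processing. Collapses whitespace in the raw text."""
    return " ".join(raw.split())


def reason(cleaned: str, call_model: Callable[[str, str], str]) -> str:
    """Stage 2: AI reasoning with a specific system prompt."""
    return call_model(SYSTEM_PROMPT, cleaned)


def format_output(model_reply: str) -> str:
    """Stage 3: output formatting. Keeps only the first line of the reply."""
    lines = model_reply.strip().splitlines()
    return lines[0] if lines else ""


def act(summary: str, outbox: list[str]) -> str:
    """Stage 4: action/integration. Appends to a list; real code would call a tool."""
    outbox.append(summary)
    return f"queued {len(outbox)} item(s)"


def run_pipeline(raw: str, call_model: Callable[[str, str], str], outbox: list[str]) -> PipelineResult:
    """Runs the four stages end to end."""
    summary = format_output(reason(process_input(raw), call_model))
    return PipelineResult(summary=summary, action_taken=act(summary, outbox))


def fake_model(system_prompt: str, user_text: str) -> str:
    """Stand-in for a real model call, used only for test inputs."""
    return f"Recommend: prototype '{user_text}'\n(details omitted)"
```

Swapping `fake_model` for a real client call is the only change needed once the stages behave; the web UI wraps `run_pipeline` afterwards.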

Deploy immediately. The goal is a URL you can share. Ask Claude Code to make it deployable with basic auth, mobile-responsive layout, error handling, and a simple feedback mechanism. Deploy with `npx vercel`. Share the URL with one named customer. Watch them use it. The feedback you get from a working prototype in four hours is worth more than four weeks of spec reviews.

Essays and handbook chapters that go further on this skill.

Why the fastest way to get alignment, test ideas, and advance your career is to build something people can touch - and exactly how to do it in 2 hours.

A concrete walkthrough of building a real prototype with AI - from problem statement to deployed, testable app. With the exact prompts and workflow.

An AI agent that turns customer feature requests into working prototypes, not PRDs. Deploys to a preview URL, opens a PR, files a Linear ticket, drafts a Notion doc.

I used to think mob programming was a productivity killer. Then I tried putting a PM, designer, and engineer in a room for one day a week to build prototypes together. It changed how my team ships.

We were about to commit a full squad to a feature for an entire quarter. A quick prototype and 5 customer calls later, we realized the premise was wrong. Here's the story.

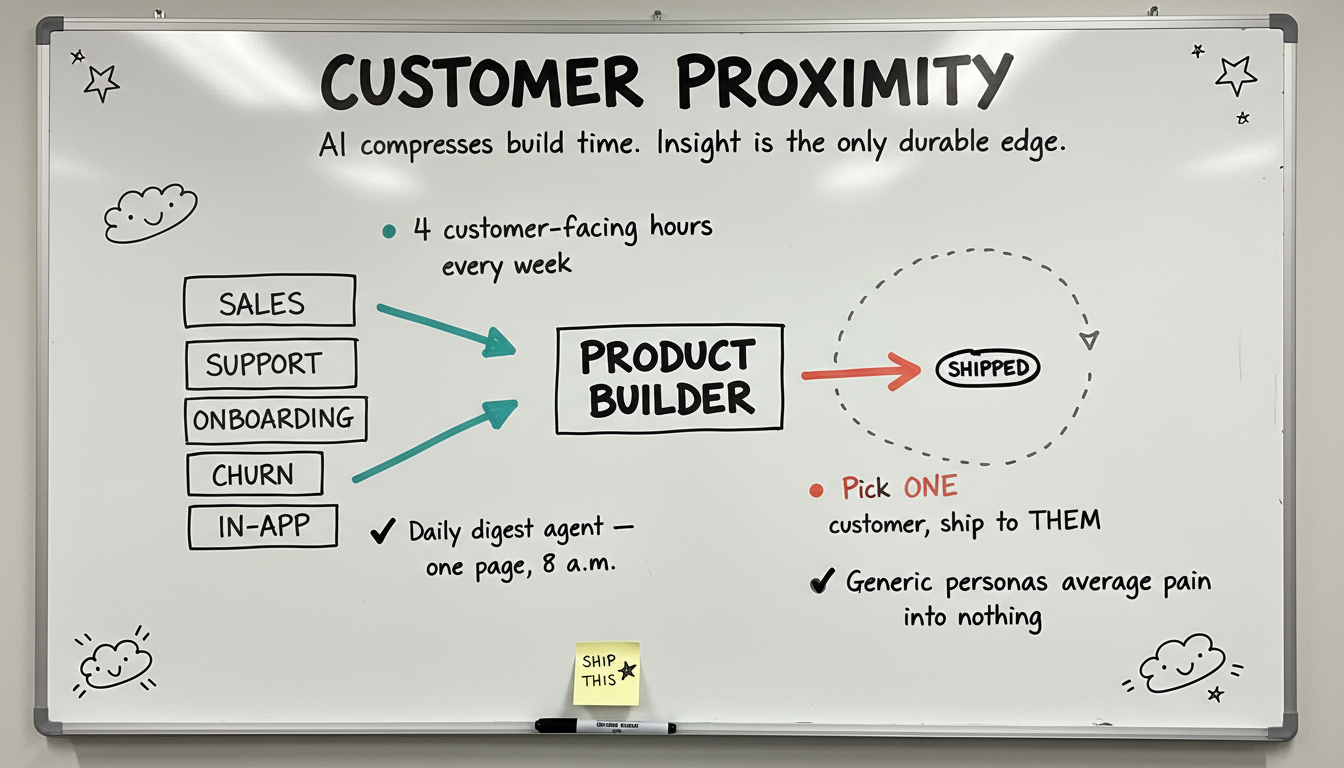

AI compresses build time toward zero. Insight is the only durable edge.

Two teams with the same Claude Code and the same Vercel stack diverge only on the insight side. The team closer to the customer wins. Customer proximity is not research — it's a Product Builder's daily oxygen.

AI compresses the build cycle toward zero, so the differentiator collapses to whoever understands the customer best. Two teams using the same Claude Code and the same Vercel stack will diverge only on the insight side. As building gets commodified, this becomes the most defensible skill in the Standard, not the least.

Customer proximity is not a research function you outsource. It is a Product Builder's daily oxygen. Sit in support queues. Take the awkward 6am sales call. Watch a real user onboard. Read the cancel reasons in raw text. Pattern-recognize across thousands of interactions until your intuition outpaces your dashboards. The PM who can't be specific about a customer named Sarah and what she said last Tuesday loses to the one who can.

Block four customer-facing hours every week. Two sales calls (one new logo, one renewal), one support shadow, one onboarding shadow. Take notes in a single doc tagged by date. After 90 days, you'll have a pattern map no dashboard reveals. Ask Claude Code: 'Read these 12 weekly notes. Cluster recurring friction by surface and severity. Flag the three signals that show up in 50%+ of weeks.'
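The "50%+ of weeks" check is simple enough to sketch by hand. The version below assumes each week's notes have already been reduced to a list of friction tags (the tagging itself is the part you would hand to Claude Code); the function name and tag strings are hypothetical.

```python
from collections import defaultdict


def recurring_signals(
    weekly_tags: list[list[str]],
    min_share: float = 0.5,
    top_n: int = 3,
) -> list[tuple[str, float]]:
    """Returns up to top_n (tag, share_of_weeks) pairs for tags that
    appear in at least min_share of the weeks, most frequent first."""
    total_weeks = len(weekly_tags)
    if total_weeks == 0:
        return []

    weeks_seen: dict[str, int] = defaultdict(int)
    for tags in weekly_tags:
        for tag in set(tags):  # count a tag once per week, however often it recurs
            weeks_seen[tag] += 1

    shares = [
        (tag, count / total_weeks)
        for tag, count in weeks_seen.items()
        if count / total_weeks >= min_share
    ]
    # Highest share first; ties broken alphabetically for stable output.
    shares.sort(key=lambda item: (-item[1], item[0]))
    return shares[:top_n]
```

Counting weeks rather than raw mentions is deliberate: one loud week should not outrank a friction point that shows up quietly every week.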

Pipe every customer signal into one searchable corpus: support tickets, sales-call transcripts, Slack-Connect threads, churn-survey responses, in-app feedback, NPS comments. Use Claude Code to build a daily digest agent: 'Pull yesterday's signals from each source. Tag by product surface and emotion. Surface anything that crossed a threshold (>3 mentions, or strong negative emotion, or a paying customer). Slack me a one-page digest at 8 a.m.'
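The threshold rule in that digest prompt can be sketched as a plain filter. This assumes signals have already been pulled and tagged by surface and emotion; the `Signal` fields and the `"negative_strong"` label are hypothetical names, not part of any real tool.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Signal:
    source: str            # e.g. "support", "sales", "nps" (hypothetical labels)
    surface: str           # product surface the signal was tagged with
    emotion: str           # e.g. "neutral", "negative_strong" (hypothetical labels)
    paying_customer: bool


def digest_candidates(signals: list[Signal], mention_threshold: int = 3) -> list[Signal]:
    """Keeps signals that cross at least one threshold: their surface has
    more than mention_threshold mentions, the emotion is strongly negative,
    or the signal came from a paying customer."""
    mentions = Counter(s.surface for s in signals)
    return [
        s
        for s in signals
        if mentions[s.surface] > mention_threshold
        or s.emotion == "negative_strong"
        or s.paying_customer
    ]
```

Everything that passes the filter goes into the one-page digest; everything else stays searchable in the corpus.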

Each sprint, pick ONE customer who triggered a signal worth solving. Ship to them specifically. Watch them use it within 48 hours. Iterate. The shipped surface generalizes later, but the first cut must solve THIS customer's pain, named and dated. Generic personas average pain into nothing. Specific customers create the constraint that produces clarity.

Essays and handbook chapters that go further on this skill.

Discovery used to mean scheduling interviews and hoping for insights. Now AI ingests every call, email, and ticket your company generates, extracts the signal, and hands you a prototype before you finish your coffee.

Customer interviews still matter more than ever. But now you show up with full signal context, a working prototype in hand, and AI that synthesizes the conversation before you close your notebook.

Stop debating what to build. Prototype it in hours, put it in front of customers, and let their reaction be the test. Assumption testing just went from weeks to days.

Feature requests tell you what's broken. Prototypes tell you what's possible. I stopped treating user feedback as a roadmap and started using it as a starting point.

We went from 'we talk to customers quarterly' to 'every PM runs weekly discovery calls.' It wasn't a mandate - it was a system. Here's the 30-day playbook.

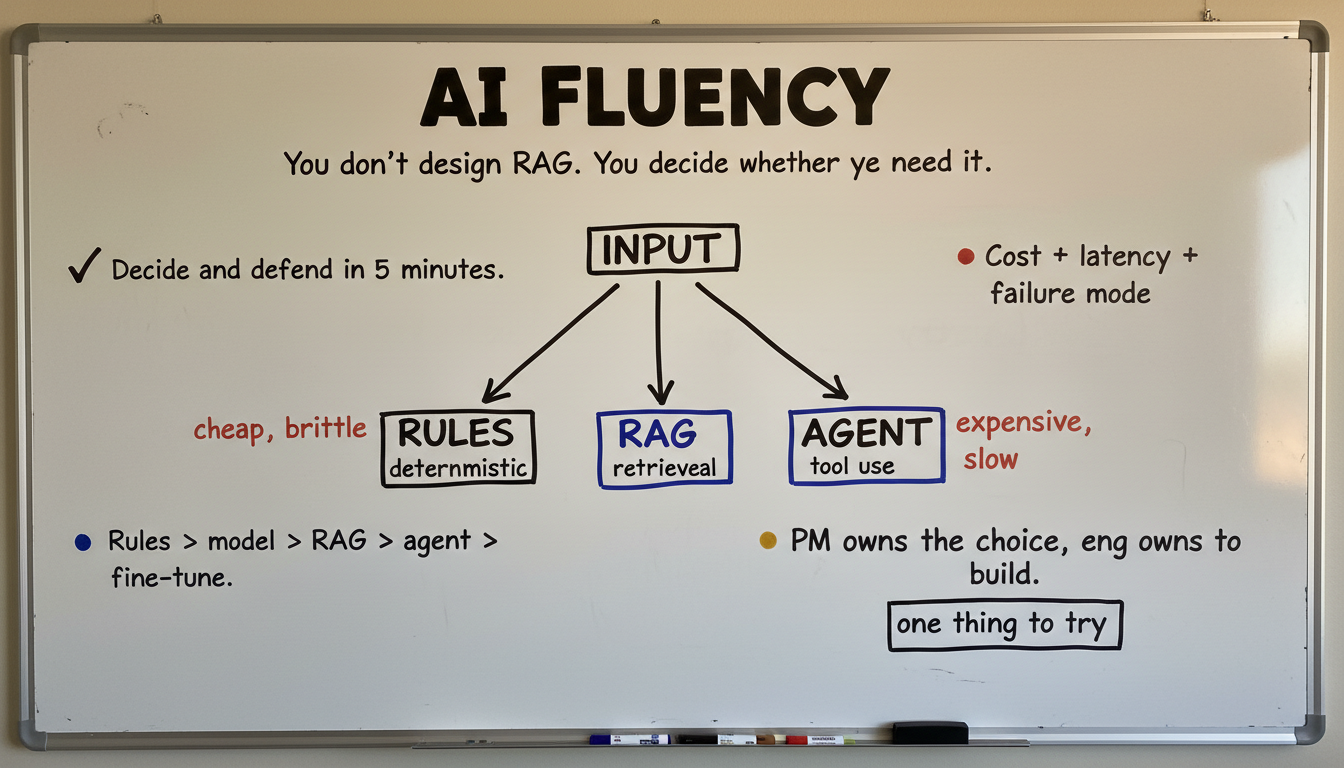

You don't design RAG. You decide whether you need it.

Know enough AI architecture to make scope, cost, and feasibility calls. Pick the right primitive (rules, model, RAG, agent + tools). Defend the choice in five minutes. Engineering still owns the implementation.

A Product Builder is not an ML engineer. You don't write retrieval pipelines, tune embeddings, or architect tool orchestration — that's engineering work. What you DO own: knowing which AI primitive applies to a problem, what the cost shape looks like, where the system will fail, and whether the scope is honest.

The wrong framing is 'design RAG.' The right framing is 'decide and defend.' AI Fluency also includes the workflow-level thinking that comes before primitive selection: where does AI fit in this chain, where's the automation boundary, what stays human. Workflow thinking is the mental model; AI primitive selection is the decision. Same skill, two faces.

Memorize the five primary primitives and when each one wins: rules (deterministic, cheap, brittle), a single model call (one-shot reasoning, predictable cost), RAG (grounded answers, added retrieval latency, freshness-sensitive), an agent with tools (multi-step actions, fuzzy outcome, harder to test), and fine-tuning (only when prompt engineering caps out AND you have stable labeled data). For each, know the cost shape, latency shape, and most common failure mode.

Before engineering starts, write three lines: (1) the AI primitive you're recommending, (2) the cost and latency you expect at scale, (3) the single biggest failure mode and what monitoring will catch it. If you can't write all three, you don't have a scope yet. Use Claude Code to pressure-test: 'I'm proposing [primitive] for [use case]. Argue against this choice. What primitive would you recommend instead and why?' Iterate until you can defend in a 5-minute exec review.

Before scoping any AI feature, map the workflow it lives inside. Where's the trigger? Where's the human checkpoint? Where's the automation boundary? Ask Claude Code: 'Map the complete workflow for [process]. For each step: who does it, what input they need, what decision they make, what output they produce. Then: can AI handle this step with >90% reliability? Mark each step AUTOMATE / HUMAN_REVIEW / HYBRID.' The workflow map is what makes your primitive choice defensible.

Walk the tree. Each yes/no narrows the cheapest primitive that fits. Or skip the tree and pick directly — either path lands the same five-minute defense.

Q1: Does the logic follow a fixed if/then pattern with no language understanding required?
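One way to walk the tree in code is sketched below. Only the first question comes from the text; the later questions and their order are assumptions, derived from the one-line descriptions of the five primitives, and the field names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class UseCase:
    fixed_if_then_logic: bool            # Q1: fixed if/then, no language understanding
    needs_multi_step_actions: bool       # assumed question: multi-step actions with tools?
    needs_grounded_fresh_answers: bool   # assumed question: answers grounded in changing data?
    prompting_has_capped_out: bool       # assumed question: prompt engineering exhausted?
    has_stable_labeled_data: bool        # assumed question: stable labeled data available?


def pick_primitive(case: UseCase) -> str:
    """Returns the first primitive whose question is answered 'yes'.
    The ordering after Q1 is an assumption, not the source's tree."""
    if case.fixed_if_then_logic:
        return "rules"
    if case.needs_multi_step_actions:
        return "agent with tools"
    if case.needs_grounded_fresh_answers:
        return "RAG"
    if case.prompting_has_capped_out and case.has_stable_labeled_data:
        return "fine-tuning"
    return "single model call"
```

The output is a starting recommendation for the five-minute defense, not a substitute for it.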


Essays and handbook chapters that go further on this skill.

A live index of every AI agent for product managers, mapped to the 7 stages of the PM Operating System: Sense, Discover, Decide, Build, Ship, Measure, Amplify.

The senior PM move in 2026 isn't using AI everywhere. It's knowing when a regex, a query, or a form beats a model.

Your prompts are production code. Version, review, eval, stage, and roll back, or your product is one Notion edit away from breaking.

Every guardrail is a product decision. The PM who outsources it to legal gets a product they didn't design and a customer experience they wouldn't approve.

Most things marketed as AI agents are actually workflows or automations with a chatbot bolted on. Here's how to tell the difference, and why it matters for how you build.

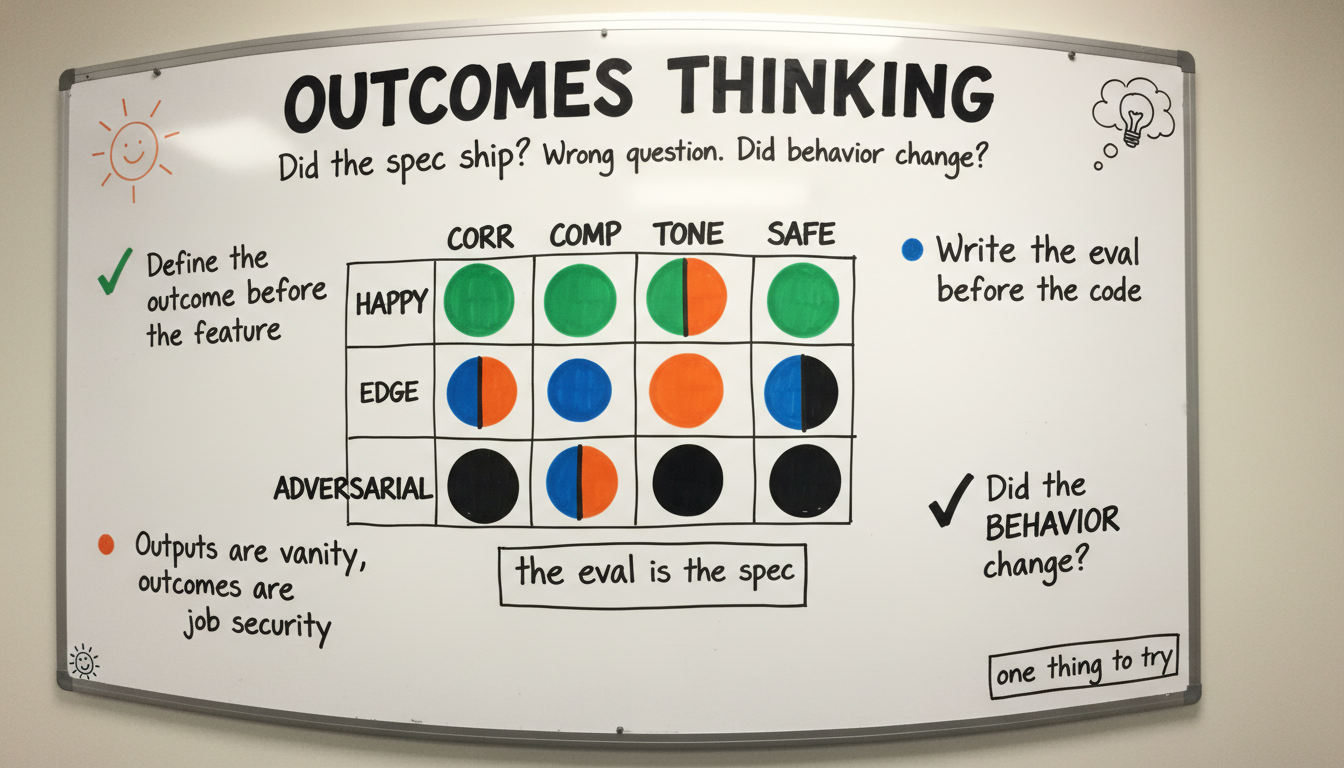

Did the spec get built? Wrong question. Did the customer's problem get solved? Right question.

Outputs are tickets shipped. Outcomes are customer behavior that changed. Measure outcomes, not features. Evals are one tool; the broader skill is articulating what 'success' means before code starts.

Output thinking is the oldest PM disease: count tickets closed, count features shipped, count specs delivered. None of those numbers prove a customer behaves differently. Outcomes thinking is the discipline of defining the behavior change you're trying to cause — and the signal that proves it — BEFORE the team starts building.

This is broader than evals. Evals are how you measure quality of an AI output. Outcomes thinking is how you measure whether the product moved the world. They overlap (the eval IS one form of an outcome contract for an AI feature), but the eval is a tool, not the skill. Teams that count tickets lose to teams that count behavior shifts. Builders who can write the success criteria in one sentence outpace the ones who write 10-page PRDs that never define what good looks like.

Before any scoping doc, write one sentence: 'This feature will cause [specific customer] to do [specific behavior] [N% more / N% faster / by date X].' If you can't write that sentence with concrete numbers and a named customer, you're not ready to build. Use Claude Code: 'Pressure-test this outcome statement. Is it specific enough to disprove? What evidence would I need to declare success or failure? What's the cheapest experiment that tests it?'

For AI features specifically: the eval is your outcome contract. Define what 'good' means in measurable terms before code is written. Build a rubric (Correctness / Completeness / Tone / Safety, 1-5 each). Generate 20 test cases covering happy path, edges, and adversarial inputs. Wire it into CI so every change runs against the rubric. The eval becomes the spec — engineering can't ship a regression without you knowing.
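A sketch of the regression check a CI job could run against that rubric. It assumes each test case has already been scored 1-5 on the four dimensions (by a human or a grader model); the `CaseScore` type, the function names, and the `tolerance` parameter are all hypothetical.

```python
from dataclasses import dataclass
from statistics import mean

DIMENSIONS = ("correctness", "completeness", "tone", "safety")


@dataclass
class CaseScore:
    case_id: str
    scores: dict[str, int]  # one 1-5 score per rubric dimension


def dimension_averages(results: list[CaseScore]) -> dict[str, float]:
    """Averages each rubric dimension across all test cases."""
    for result in results:
        for dim in DIMENSIONS:
            if not 1 <= result.scores[dim] <= 5:
                raise ValueError(f"{result.case_id}: {dim} score must be 1-5")
    return {dim: mean(r.scores[dim] for r in results) for dim in DIMENSIONS}


def regressions(
    baseline: list[CaseScore],
    candidate: list[CaseScore],
    tolerance: float = 0.0,
) -> list[str]:
    """Lists dimensions where the candidate's average fell more than
    `tolerance` below the baseline's average."""
    base = dimension_averages(baseline)
    cand = dimension_averages(candidate)
    return [dim for dim in DIMENSIONS if cand[dim] < base[dim] - tolerance]
```

A CI step would fail the build whenever `regressions(...)` returns a non-empty list, which is what makes the eval behave like a spec.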

After launch, the dashboard answers ONE question: did the outcome change? Not 'did users click the button?' Did the behavior shift? Build a daily-running script: 'For the cohort that touched [feature] in the last 7 days, compute [outcome metric]. Compare to the matched cohort that didn't touch it. Compare to baseline before launch. Alert if the gap is below the threshold I committed to in the scoping doc.' If the dashboard can't answer that question, your outcome statement was vague.
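The core of that daily script might look like the sketch below. It assumes the cohorts have already been queried into lists of per-user metric values, and it assumes the alert is keyed to the gap against the matched cohort; the text does not say which gap the threshold applies to. Function and key names are hypothetical.

```python
from statistics import mean


def outcome_check(
    touched: list[float],
    matched: list[float],
    baseline: float,
    committed_gap: float,
) -> dict[str, object]:
    """Compares the cohort that touched the feature against a matched
    cohort and the pre-launch baseline.

    touched / matched: per-user outcome metric values for the last 7 days.
    baseline: the same metric before launch.
    committed_gap: the minimum lift committed to in the scoping doc.
    """
    touched_avg = mean(touched)
    gap_vs_matched = touched_avg - mean(matched)
    gap_vs_baseline = touched_avg - baseline
    return {
        "gap_vs_matched": gap_vs_matched,
        "gap_vs_baseline": gap_vs_baseline,
        # Assumption: alert when the matched-cohort gap misses the commitment.
        "alert": gap_vs_matched < committed_gap,
    }
```

If you cannot fill in `committed_gap` from the scoping doc, that is the vagueness the paragraph above warns about.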

Essays and handbook chapters that go further on this skill.

Kill the PRD. Ship against a test set. The eval is the contract, the changelog, and the definition of done.

No feature leaves staging without the traces, metrics, and evals that will tell you whether it's working. Before your first customer hits it.

Outcomes lag by weeks. Direction moves with each iteration. The seven leading indicators that predict outcomes 4-8 weeks ahead, and the dual-cadence system.

The daily rhythm that replaces sprints, stand-ups, and roadmap reviews. Sense what's happening, build a response, measure the impact, amplify what works.

Rebuild a 200-person product org around evals, not velocity. The Quality Spine, Product Builder pods, the Economics Unit, the Discovery Network. The 90-day rebuild plan.

Great products lose to mediocre products with better stories.

If you can't explain it, position it, and put it where customers find it, you didn't ship it — you built it. Modern Product Builders own the launch narrative AND the distribution motion, not just delivery.

The market is noisy. AI has commodified building, which means hundreds of teams are shipping similar features. The differentiator collapses to whoever can tell the clearest story and put it in front of the right audience first. Great products with no story lose to mediocre products with a great story — every month, in every category.

This is two skills bolted together because they fail together. Storytelling without distribution is a journal entry no one reads. Distribution without a story is spam. Modern Product Builders own both: they write the launch tweet before the feature, they pick the channels and own the calendar, they show up in the LinkedIn comment section themselves. The line between builder, PM, founder, and GTM is collapsing. Product Builders who only own delivery are owning a shrinking slice of the work that matters.

Before any feature is built, write the one-tweet announcement (280 chars max). Force yourself to name the customer pain in plain English, the change the feature creates, and one concrete number. If you can't write the tweet, you can't write the spec — the work isn't crisp enough yet. Use Claude Code: 'Here's the feature I'm scoping. Draft five candidate launch tweets at different angles (problem-first, contrarian, customer-quote, before/after, big-number). Which one would you bet on?' Iterate until one lands.
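Two of those constraints are mechanical enough to lint before you ask anyone for feedback: the 280-character cap and the presence of a concrete number. The sketch below is hypothetical and approximate; it uses Python's plain `len()`, which can differ from how a platform actually counts links or some characters, and it cannot judge whether the pain is named in plain English.

```python
import re

MAX_TWEET_CHARS = 280


def tweet_problems(draft: str) -> list[str]:
    """Returns a list of mechanical problems with a launch-tweet draft.
    An empty list means the draft passes these two checks only."""
    problems = []
    if len(draft) > MAX_TWEET_CHARS:
        problems.append(f"too long: {len(draft)} chars (max {MAX_TWEET_CHARS})")
    if not re.search(r"\d", draft):
        problems.append("no concrete number")
    return problems
```

Run it over the five candidate tweets and discard any that fail before deciding which angle to bet on.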

Marketing pages, sales decks, onboarding tooltips — all downstream of the demo. The demo is the single artifact that has to land in 90 seconds. Record a Loom in two takes. No edits. Watch yourself back. If you wouldn't reshare it, the feature isn't shippable yet. Replace any product copy with the demo whenever possible. Demos travel; docs don't.

Don't hand distribution to GTM until you've personally placed the feature in front of the first 100 customers and watched what works. Pick three channels (LinkedIn post, one-to-one DM, customer Slack-Connect ping). Run each daily for a week. Track who replied and what landed. The channel patterns you discover become the brief for whoever picks up GTM long-term. The Product Builder who skips this step ships features no one finds.

Essays and handbook chapters that go further on this skill.

Your model vendor changed the model on Tuesday and didn't tell you. Run a daily replay against production or your customers will catch it before you do.

Gross margin compression, NRR redefinition, the new rule of 40, the right comp set, and the four CFO sub-agreements that make the trough boring.

The annual strategy deck is a memorial to a meeting. Run a one-page living strategy doc, updated weekly with the signals that could change your beliefs.

Product Requirements Documents were a necessary evil in a world where building was expensive. That world is gone.

The exact one-page template we use instead of PRDs. Covers problem, evidence, solution, metrics, and risks - nothing else.

Own the full arc. Insight to prototype to launch to gross margin to second iteration.

The meta-skill that ties the other five together. Owning the whole arc — including the parts that used to live in finance, ops, support, and leadership — is what separates a Product Builder from a feature owner.

The other five skills are what a Product Builder DOES. This one is how they HOLD it. The capability to keep the full arc in your head — insight feeding prototype, prototype meeting customer, customer signal updating the next ship, gross margin and pricing tracked alongside adoption, the org rallying behind the launch story — is the meta-skill that makes the rest reinforce instead of fragment.

For most of PM history, this was theoretical. Real PMs lived inside one of the slices (discovery OR delivery, IC OR manager, feature OR pricing). The AI-compressed cycle makes the slice obsolete. The market rewards builders who close the whole loop themselves: from the customer signal through the prototype through the launch through the gross margin conversation with finance through the hire that scales it. Product Builders who only own delivery own a shrinking slice; the ones who own the whole arc define the role.

Once a quarter, write the full-arc map for your current product: customer signal → prototype → ship → launch → gross-margin trajectory → next-iteration hypothesis. Each arrow names the specific evidence that lets you move to the next stage. Use Claude Code to pressure-test: 'Here's my full-arc map for [product]. Where's the weakest link? Which arrow is wishful thinking instead of evidence-backed?' The map is the contract you hold yourself to.

Pricing is not a finance problem. For AI products especially, the cost-per-request determines whether the feature is even viable. Compute the unit economics before the engineering investment is locked in. Defend the pricing model in the same review as the launch plan. If you don't, someone else does it badly, and the feature is in market with the wrong number on it.
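The unit-economics arithmetic fits in a few lines. The sketch below assumes token-based model pricing quoted per million tokens; the function names are hypothetical, and any prices you pass in are your own inputs, not a vendor's published rates.

```python
def cost_per_request(
    input_tokens: int,
    output_tokens: int,
    usd_per_million_input: float,
    usd_per_million_output: float,
) -> float:
    """Model cost of one request in USD, given per-million-token prices."""
    total = input_tokens * usd_per_million_input + output_tokens * usd_per_million_output
    return total / 1_000_000


def gross_margin(price_per_request: float, cost: float) -> float:
    """Gross margin as a fraction of price: (price - cost) / price."""
    if price_per_request <= 0:
        raise ValueError("price_per_request must be positive")
    return (price_per_request - cost) / price_per_request
```

As a worked example with made-up numbers: 2,000 input tokens and 500 output tokens at $3 and $15 per million tokens cost $0.0135 per request, so charging $0.05 per request leaves a gross margin of 73%. This counts model spend only; hosting, retrieval, and support costs would lower the margin further.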

Some work scales by handing it off; some breaks. Distribution scales (eventually) to GTM. Customer proximity stays yours forever. Pricing migration partners with finance, but you keep the customer narrative. Evals stay yours until the next PM takes over the surface. The skill is knowing which handoffs to make when. The default handoff (everything goes to someone else after launch) creates the fragmented-ownership disease that defined PM in 2018. Resist.

Essays and handbook chapters that go further on this skill.

Cost per successful action is the new primary PM metric. If you don't own it, your CFO will kill your product before your customers do.

Per-seat is dead for AI. Price the work the seat is no longer doing: outcomes, usage, value units.

The 39-agent chapter told you what to deploy. This is the skill you need once they're running: great editing at volume.

The 90-day plan for making the shift from traditional PM to product builder. Done in order. In 90 days, you have a different job.

A complete, fork-ready job-description ladder for Builder PMs. Four levels calibrated to scale from your first Builder hire to your most senior IC. Each level downloadable as its own file.

The PM job that existed in 2023 and the Product Builder job that exists in 2026 are not the same job. Ten axes, side by side.

| PM, 2023 | Product Builder, 2026 |
| --- | --- |
| Ten-page PRD | A working prototype + a 30-pair eval set |
| Signal → ship: 6–12 weeks | Signal → ship to one customer: 72 hours |
| Did the spec get built? | Did this customer's problem get solved? |
| PM uses Figma, Notion, Jira | PM uses Claude Code, the real codebase, the real design system |
| Frameworks, decks, roadmap stories | Pull requests merged · prototypes shipped · evals passed |
| PM writes spec → eng decodes wishes | PM hands eng a working surface; eng scales it |
| Generalize from interviews | Ship to the specific customer who triggered the signal |
| Avoid wrong specs | Kill bad bets faster · accept wrong prototypes as the cost of speed |
| Product · Design · Eng silos | Product builders embedded with eng review; design-as-system |
| L4 → L5 → L6 via scope | L4 → L5 → L6 via shipped surfaces and merged PRs |

L4 to Principal. Four JDs you can fork today, one per rung. Same discipline, escalating scope.

Ships a working prototype to a real user in their first week. Earns trust by closing tickets, not opening them.

Owns one product surface end to end. Has evals running in production. Can defend cost-per-request to finance.

Operates across two or more surfaces. Sets the standards juniors copy. Their prototypes regularly become roadmap.

Defines the org's AI architecture taste. Makes the bets the CPO didn't know to ask about. Owns the bar.

The biggest shift: a Product Builder is no longer just managing software delivery. They are actively building, testing, orchestrating AI, shaping workflows, validating markets, and shipping outcomes continuously. Twelve principles describe how.

Long planning cycles are dead in most software categories. A Product Builder ships constantly, learns from reality, and adjusts fast.

The product itself becomes the research engine. Speed of learning matters more than perfection.

You cannot fully outsource understanding anymore. Modern Product Builders build prototypes themselves, use AI coding tools, wire workflows together, test UX directly, analyze data themselves, prompt models, and create internal tools.

You do not need to be a staff engineer. But you absolutely need builder capability.

Nobody cares how many tickets were closed. A Product Builder obsesses over adoption, retention, activation, conversion, time-to-value, customer behavior changes, business impact.

Features are only temporary assumptions.

Every workflow should trigger the question: why is a human doing this manually? Product Builders think in agents, orchestration, automation, augmentation, continuous learning loops, workflow compression.

They redesign workflows, not just screens.

This is not gut feeling. Strong intuition comes from deep customer exposure, pattern recognition, market timing, shipping velocity, seeing thousands of product interactions.

The best Product Builders make decisions with incomplete data because they operate inside tight learning loops.

Big-bang releases create blindness. Modern builders release small, instrument everything, validate quickly, stack learning, improve continuously.

The best products today evolve almost daily.

AI can generate code. It cannot replace deep human understanding of pain, emotion, workflow friction, politics, or buying behavior. The best Product Builders stay extremely close to customers, support, sales calls, onboarding, churn, implementation pain, user behavior.

This is where the real advantage comes from.

The role is no longer isolated PM work. A Product Builder operates across engineering, design, GTM, AI, support, analytics, operations.

They connect dots fast and remove friction across the system.

The market is noisy. The ability to frame problems, explain the future, create belief, align teams, communicate urgency, simplify complexity is now a massive competitive advantage.

Great Product Builders are great storytellers.

Product Builders do not hide behind dependencies, process, org charts, Jira, research delays, engineering constraints.

They find a way forward. Even if imperfect.

Building is no longer enough. Modern Product Builders understand positioning, social distribution, LinkedIn narrative building, community, GTM motion, product-led growth.

The line between builder, PM, founder, and GTM is collapsing.

AI changed customer expectations permanently. Products now need to learn, adapt, personalize, improve continuously, evolve with workflows.

Static software feels broken now. That is why the old 'requirements manager' version of product management is collapsing so fast.

Six skills, four-rung ladder, twelve principles. Published May 11, 2026.

Actively maintained as the role evolves. Errata are accepted via email or LinkedIn DM.

PB-2 is planned for 2027. Expected additions: model evaluation depth, org-design patterns, retrieval architectures.

No errata reported as of May 11, 2026. Found something to fix? Contact the editor.

The questions I get asked most often after someone reads the standard.

A Product Builder is a product manager redefined for the AI era. Instead of writing specs, running translation meetings, and managing backlog tickets, Product Builders prototype in hours with tools like Claude Code, design workflows end-to-end, reason about AI system architecture, and ship working software alongside engineering. The title reflects the shift from describing products to building them.

A traditional PM's core output is a document: a PRD, a spec, a roadmap slide. A Product Builder's core output is a working prototype. PMs translate between business and engineering; Product Builders remove the translation step by building the thing themselves. PMs optimize features; Product Builders design complete AI workflows from input to action. The job is the same customer-value job; the medium changed.

Six skills define Product Builder OS, the working standard for the Product Builder role, ordered by what differentiates a Product Builder from a traditional Product Manager. Rapid Prototyping: idea to working prototype in under four hours using Claude Code. Customer Proximity: staying close to customers, support, churn, onboarding daily, not quarterly. AI Fluency: deciding which AI primitive (rules, RAG, agents, fine-tuning) fits a workflow, plus the workflow thinking that surrounds the choice. Outcomes Thinking: defining the customer behavior change you'll cause and the eval that proves it, before code is written. Storytelling & Distribution: writing the launch tweet before the spec, running the first 100 customers' rollout yourself. End-to-End Ownership: from insight through prototype through launch through gross margin.

No. They change the handoff. Engineers still own production systems, scale, reliability, and security. Product Builders ship the prototype that proves the idea works, then hand it to engineering with real user feedback, validated architecture decisions, and a clear list of what still needs hardening. Engineers get to build the right thing the first time instead of rebuilding from speculation.

Start with one skill and one tool. Install Claude Code. Pick a real workflow in your current product, something manual that should be automated, and build a working prototype of it end-to-end in a single afternoon. Ship that prototype to one customer. Get their feedback. Iterate. Do that every week. The gap between describing products and building them closes the fourth time you ship something users actually touch.

Claude Code for prototyping and agent work. Cursor or Claude Desktop for pair-programming. Vercel or Railway for one-command deploys. A simple observability stack (logs plus one eval harness). Opportunity Solution Trees for discovery. The stack is smaller than most PMs expect; the goal is idea-to-deployed-prototype in under four hours. If your stack can't do that, simplify it.

AI is replacing the translation layer of the PM role: the meetings that restate requirements, the specs that get rewritten into tickets, the status reports that rearrange the same facts. That work is automatable. What AI cannot replace is customer judgment, opinionated prioritization under uncertainty, and taste. Product Builders are PMs who invested in the parts AI cannot do and let AI do the rest.

Product Builder OS is the working standard for the Product Builder role. It defines, in one document, what the role is, what the role isn't, the six skills that constitute it, the career ladder that supports it, and the principles that govern it. Product Builder OS is the 2026 Edition; PB-2 is planned for 2027 as the role evolves and new skills become load-bearing. The standard is edited by Falk Gottlob.

Pick one skill above. Open Claude Code. Build something real in the next four hours. That is how the gap closes.